What makes this technology possible?

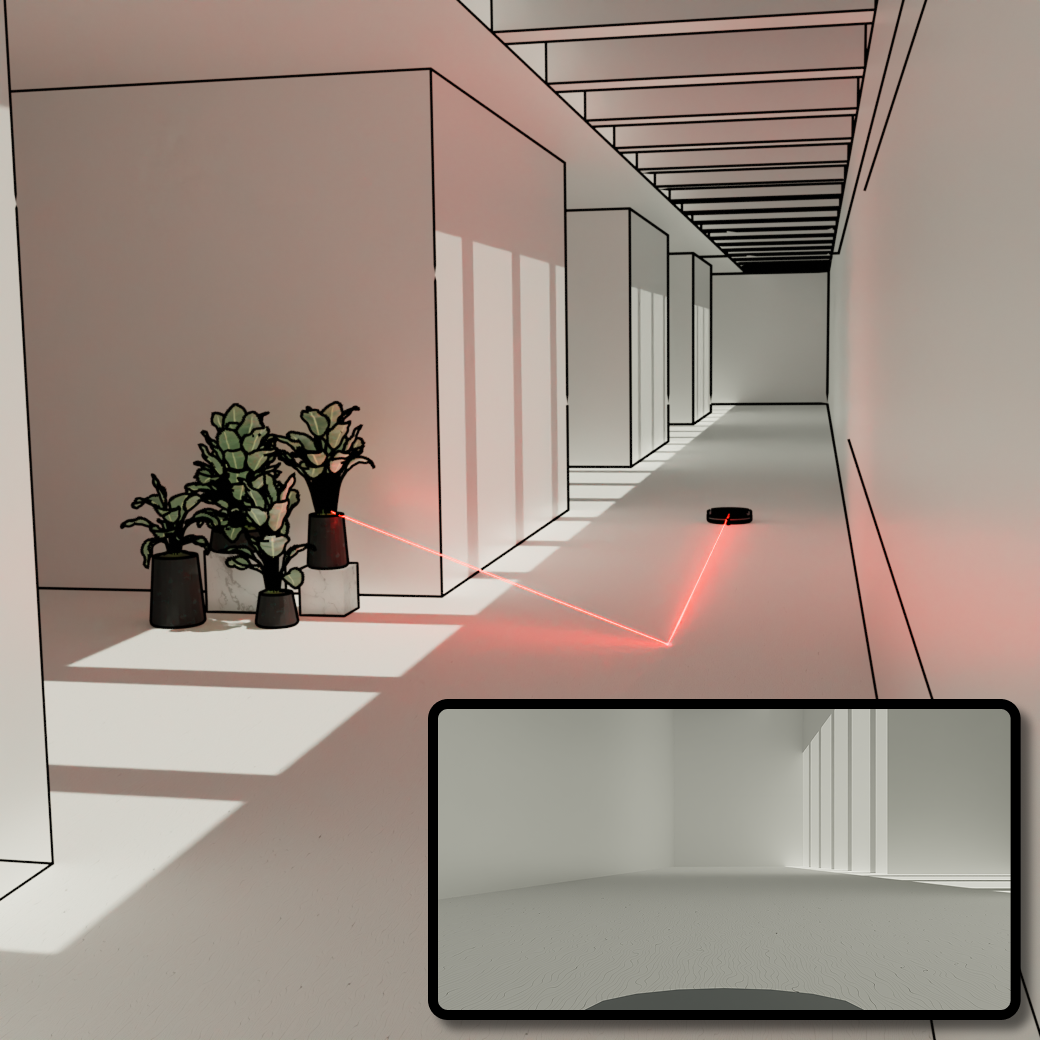

Even when an object is around a corner, tiny amounts of light still scatter off nearby surfaces like walls and floors. We showed that the LiDAR sensors already built into consumer devices can measure those faint reflections and use them to recover information about hidden objects.

What makes consumer LiDAR special?

Modern consumer LiDAR systems are surprisingly sophisticated. They can measure the arrival time of light with extremely high precision, down to tiny fractions of a nanosecond. Those timing measurements carry much more information about the world than a depth map of the visible scene, and we’re beginning to unlock some of those capabilities computationally.
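
To give a rough sense of what sub-nanosecond timing means in terms of distance, here is a minimal sketch of the standard time-of-flight relation: light travels out and back, so a timing difference of Δt corresponds to a depth difference of c·Δt/2. The function name and the timing values below are illustrative assumptions, not the specifications of any particular device.

```python
# Illustrative sketch: convert a round-trip timing difference into depth.
# The timing values are hypothetical examples, not measured device specs.

C = 299_792_458.0  # speed of light in vacuum, in meters per second


def round_trip_depth(dt_seconds: float) -> float:
    """Depth difference (meters) for a round-trip timing difference.

    The light covers the distance twice (out and back), hence the
    division by two.
    """
    return C * dt_seconds / 2.0


if __name__ == "__main__":
    for dt_ps in (1000, 100, 10):  # picoseconds; 1000 ps = 1 ns
        depth_mm = round_trip_depth(dt_ps * 1e-12) * 1e3
        print(f"{dt_ps:>5} ps -> {depth_mm:.1f} mm")
```

Under this relation, a full nanosecond corresponds to about 15 cm of depth, and a tenth of a nanosecond to about 1.5 cm, which is why fine timing resolution matters so much for picking apart faint, indirect reflections.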

Can my phone already do this?

Today’s phones weren’t designed specifically for this application, and there are still significant limitations in range, resolution, and robustness. But what’s exciting is that the core sensing hardware already exists in many consumer devices, which means future systems could potentially build on that foundation with little to no modification of the hardware.

Why does this matter to ordinary people?

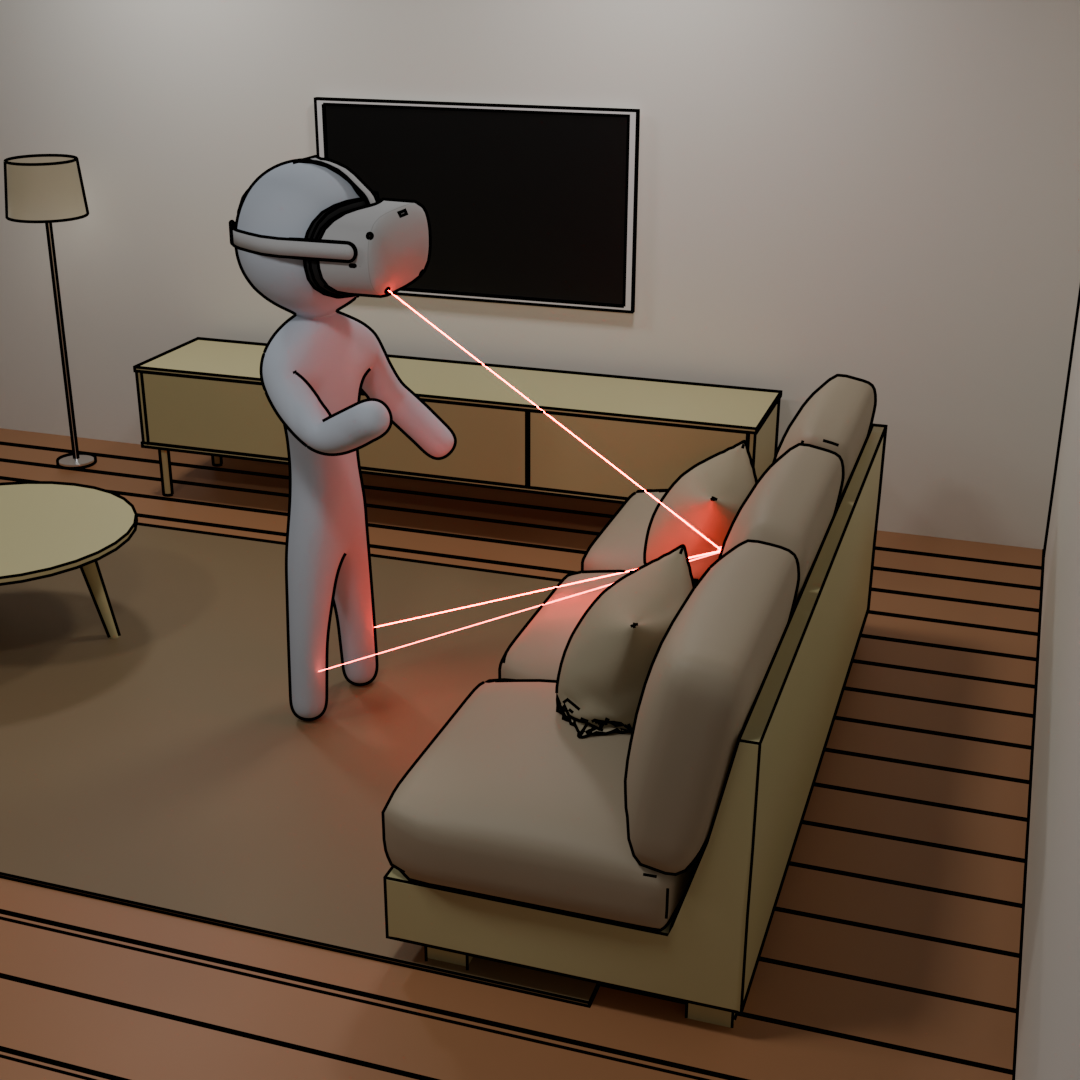

Technologies like cameras, GPS, and depth sensing all started as specialized research systems before becoming part of everyday life. Around-the-corner sensing could eventually improve safety, accessibility, robotics, wearable computing, and how devices understand the spaces around us. These capabilities may ultimately emerge on the low-cost devices people already use every day.

How close is this to real-world deployment?

We’re still early, but this capability has now moved from specialized laboratory equipment to consumer-grade sensors. That’s a major step toward real-world deployment, though many important research challenges remain before this becomes a robust commercial technology.

For example, current systems still struggle in extremely low-light and high-noise conditions, which are very common in real environments. Handling completely unknown motion, where both the camera and hidden objects are moving unpredictably, is another major challenge. There’s also a lot of work ahead in combining these measurements with RGB cameras and other sensors, improving reconstruction quality and reliability, and integrating these systems into real-time robotic, automotive, and wearable platforms.

More broadly, we’re still learning how much information can be extracted from indirect light in everyday environments. I think this field is at a very exciting stage, with many open problems spanning physics, sensing hardware, computer vision, machine learning, and robotics. We are always looking for collaborators who are excited about working on these topics.